College Scorecard Earnings Update: What you should know and how to think about it.

A big part of deciding where to go to college is whether you'll make good money coming out of it, or whether you'll get a good return on investment for your troubles. We've talked about this extensively this year (why the median ROI hides a harsher reality, what graduation rates actually count, and how to build a complete financial picture before you enroll) because return on investment is not as straightforward as people make it out to be.

In this post we're going to talk about the College Scorecard 2026 update from last week, what changed in the earnings methodology, and what you should take away from it — if anything. Over the next couple of weeks we'll be rolling out methodology changes to our database, and this should be seen as something of a primer on what is now in the Scorecard and how we are beginning to think about it.

What changed, and why

The previous benchmark used to evaluate institutions was the 10-year post-entrance metric, a measure of the long-term earnings of all individuals who entered an institution, not just those who graduated. As I explored in this piece on graduation rates, it gave a picture of the full population: completers, non-completers, and everyone in between.

What's there now is different in a few important ways. The March 2026 update refreshed the 4-year exit-cohort earnings, which measure completers only (students who finished their degree, were earning income, and were no longer enrolled) 4 years after graduation, and added new state and national comparison benchmarks alongside them.

The shift reflects a broader policy direction. The One Big Beautiful Bill Act established a legislative accountability framework using 4-year post-completion earnings as its standard, and the negotiated rulemaking process that preceded it had been pushing toward completers-based measurement for some time.

One of the reasons for moving away from the longer entry-cohort horizon was a recognized complication: the time horizons for the original benchmark turned out to be off, with measurement landing at 7, 9, and 11 years after entry rather than the intended 6, 8, and 10. That's a meaningful input into the decision, but the direction of travel was already set.

The net result: the 4-year completers-based figure is now the primary earnings lens consumers see, and the older entry-cohort measure hasn't been updated alongside it.

Intuitively, the two metrics aren't as far apart as they sound. If you assume a roughly 6-year graduation timeline, 4 years after graduating lands you in roughly the same place as 10 years after entering. They're viewing the same pathway from a different vantage point. Comparing the two metrics, we found a correlation of roughly 0.90. They mostly agree.

But "mostly" is doing some work there.

4-year vs. 10-year earnings: what the College Scorecard 2026 update actually shows

Here's what the gap looks like across roughly 1,500 institutions that predominantly award bachelor's degrees.

| | Raw | Timing-adjusted |
|---|---|---|
| Median gap (4yr minus 10yr) | +$4,869 | −$1,177 |
| Average gap | +$5,304 | n/a |
| P25 gap | +$1,228 | n/a |
| P75 gap | +$9,029 | n/a |
| Share where 4yr is higher | 82% (1,212 of 1,479) | 42% (614 of 1,479) |

To make sense of this change we have to think about three things working together:

Measurement timing. The 4yr data is newer and reflects a higher-wage environment. This is a roughly uniform upward push across all schools, accounting for about half the median raw gap, and it doesn't tell you anything about the schools themselves.

The 4yr data reflects 2022/2023 cohort data; the 10yr data is frozen at 2020/2021. That's roughly a 2.5-year gap in measurement — and private sector wages grew at about 4.5–5% per year over that period, according to the Bureau of Labor Statistics Employment Cost Index. Over 2.5 years, that compounds to roughly 11–12% nominal wage growth baked into the comparison before you've accounted for anything else. Strip that out, and the median institution actually flips: 4yr is no longer higher on average, and the share of schools where 4yr leads drops from 82% to 42%.

Who gets counted. The 4yr metric counts only completers who were earning income and not enrolled. Students who dropped out are excluded entirely. At schools with lower completion rates, this filter has a large effect: the 4yr figure describes a smaller, more selected group than the 10yr figure does.

Career trajectory. The 10-year metric included a lot of different scenarios. We've unpacked time to graduation and the lack of graduation, but this also goes the other way. Someone who can complete in 4 years (or even 3) has a head start on peers who take 6+ years to start earning. Focusing on the post-completion metric takes that away entirely, which ends up affecting some of the highest earners.

Also, people who go to graduate school early may or may not show up in one metric versus another. In this case, if you are in graduate school at the time of measurement, you are excluded.
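The measurement-timing point above comes down to compound growth. As a minimal sketch, assuming a flat 4.5% annual wage growth rate over a 2.5-year gap (the low end of the range quoted above) and a hypothetical school whose figures are invented for illustration, the adjustment deflates the 4yr figure back to the 10yr measurement period before taking the gap. The function names are ours for this example and are not part of any Scorecard tooling.

```python
def timing_factor(annual_growth: float, years: float) -> float:
    """Compound nominal wage growth over the measurement gap."""
    return (1 + annual_growth) ** years


def timing_adjusted_gap(four_yr: float, ten_yr: float, factor: float) -> float:
    """Deflate the newer 4yr figure to the 10yr period, then subtract."""
    return four_yr / factor - ten_yr


# Assumption: 4.5% per year for 2.5 years.
factor = timing_factor(0.045, 2.5)

# Hypothetical school, not taken from the Scorecard data.
four_yr, ten_yr = 60_000, 55_000
raw_gap = four_yr - ten_yr
adjusted_gap = timing_adjusted_gap(four_yr, ten_yr, factor)

print(f"timing factor: {factor:.3f}")
print(f"raw gap: {raw_gap:+,.0f}")
print(f"timing-adjusted gap: {adjusted_gap:+,.0f}")
```

With these inputs the factor comes out a little above 1.11, and a raw gap of +$5,000 turns slightly negative once the wage growth is stripped out. That is the same kind of flip the median institution shows in the table above. At 5% annual growth the factor would be closer to 1.13.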

When you break institutions into quartiles by their 4yr/10yr ratio, here's what we see:

| Quartile | Raw ratio | Timing-adj ratio | Avg grad rate | Avg part-time | Avg age 25+ |
|---|---|---|---|---|---|
| Q1 (10yr favored) | 0.95 | 0.85 | 65.2% | 5.3% | 7.2% |
| Q2 | 1.06 | 0.95 | 59.7% | 9.9% | 11.6% |
| Q3 | 1.13 | 1.01 | 56.4% | 11.2% | 13.7% |
| Q4 (4yr favored) | 1.28 | 1.15 | 47.8% | 16.9% | 22.1% |

After removing timing, Q2 flips entirely. Only Q3 and Q4 retain a genuine 4yr premium — and Q4 retains it clearly: more part-time enrollment, more students over 25, lower completion rates.
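As a rough cross-check of the table above, dividing each raw ratio by a single timing factor gets close to the timing-adjusted column. This is a sketch under the assumption of 4.5% annual wage growth over 2.5 years, and it works from the rounded quartile averages rather than school-level data, so treat the match as approximate.

```python
# Raw 4yr/10yr ratios by quartile, copied from the table above.
raw_ratios = {"Q1": 0.95, "Q2": 1.06, "Q3": 1.13, "Q4": 1.28}

# Assumption: 4.5% annual wage growth compounded over a 2.5-year gap.
factor = 1.045 ** 2.5

# Divide out the timing factor and round to the table's precision.
adjusted = {q: round(r / factor, 2) for q, r in raw_ratios.items()}

for q, r in adjusted.items():
    label = "4yr premium" if r > 1 else "no 4yr premium"
    print(f"{q}: {r:.2f} ({label})")
```

Under that assumption only Q3 and Q4 stay above 1.0, which matches the pattern described above.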

Also, if you look at the implied "growth rate" between 4yr and 10yr earnings across the income distribution, it appears that lower-earning schools are growing faster:

| Earnings quintile | Avg 10yr | Avg 4yr | Implied annual "growth" |
|---|---|---|---|
| Q1 (lowest) | $38,338 | $45,073 | 6.4% |
| Q2 | $48,078 | $54,850 | 5.3% |
| Q3 | $54,208 | $59,763 | 3.9% |
| Q4 | $60,889 | $65,140 | 2.7% |
| Q5 (highest) | $80,139 | $83,339 | 1.5% |

This isn't what's actually happening. The gradient is a completion filtering effect, not a wage trend. At a bottom-quintile school (average 10yr earnings around $38k, average graduation rate around 43%), the 10yr metric counts everyone who ever enrolled, including the roughly 57% who left without a degree and may be earning significantly less. The 4yr metric counts only the people who finished and are working. Those are two very different questions when completion is low: "What does the average person who walked through the door end up earning?" versus "What does the average person who walked out with a credential and is working earn?"

At a top-quintile school, with a 77% average graduation rate, those two populations are much closer to the same people. The small remaining gap is timing inflation plus the graduate school trajectory effect: students whose later-career earnings show up in the 10yr figure but who were still enrolled when the 4yr measurement was taken.

You could think of the implied growth gradient as a rough proxy for completion filtering intensity: massive at the bottom of the distribution, minimal at the top. That's also why timing adjustment alone doesn't collapse the gap for low-earning schools: even after removing ~11% timing inflation, the bottom quintile still shows an adjusted ratio above 1.0. Completers at those schools genuinely do earn more than the full entrant cohort. The timing just amplifies an effect that was already there.
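The filtering effect can be illustrated with a toy calculation. The sketch below assumes hypothetical earnings of $50,000 for completers and $35,000 for non-completers at every school, and uses a simple weighted average as a stand-in for the Scorecard's medians, so only the direction of the result should be read from it. Holding earnings fixed, the ratio between the completers-only figure and the all-entrants figure depends entirely on the graduation rate.

```python
def entrant_average(grad_rate: float, completer: float, non_completer: float) -> float:
    """Blend completer and non-completer earnings by graduation rate."""
    return grad_rate * completer + (1 - grad_rate) * non_completer


# Hypothetical earnings, identical at both schools (illustration only).
COMPLETER, NON_COMPLETER = 50_000, 35_000

ratios = {}
# Graduation rates are the quintile averages quoted in the text.
for label, grad_rate in [("bottom quintile", 0.43), ("top quintile", 0.77)]:
    blended = entrant_average(grad_rate, COMPLETER, NON_COMPLETER)
    ratios[label] = COMPLETER / blended
    print(f"{label}: all entrants ${blended:,.0f}, "
          f"completers/entrants ratio {ratios[label]:.2f}")
```

With identical earnings at both schools, the ratio is about 1.21 at a 43% graduation rate and about 1.07 at 77%. The gap comes from who is counted, not from faster wage growth.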

How we use earnings data

To account for both the noise in any single snapshot and the genuine variation across career arcs, College Azimuth builds a distributional earnings composite: we combine 10-year, 8-year, and 6-year earnings data, tracking both the P25–P75 spread and earnings growth trajectory. We do this because some careers start strong and plateau while others build slowly and compound over time. Looking at just one snapshot misses that dynamic.

We also do it because the data itself can be noisy — small cohort sizes, single-year IRS snapshots, and variation in how institutions are measured year to year can all create false signals. The more context you can give it, the better.

In each case, we standardize and apply a technique called winsorizing to limit the distortion caused by extreme outliers. We do this at the institution level, controlling by type and other factors, and again at the program level within institutions — so we can show where value is actually being created at the program level, not just the school level. The goal is to compare apples to apples, within the limits of what the data actually allows. A full description of how we build the composite and handle missing data is available on our methodology page.
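For readers unfamiliar with the term, winsorizing caps extreme values at chosen percentiles instead of dropping them. The sketch below is a generic illustration rather than a description of our actual pipeline: the 10th/90th percentile cutoffs, the function names, and the sample earnings are all assumptions made for the example.

```python
from statistics import mean, pstdev


def percentile(sorted_vals, q):
    """Linearly interpolated percentile (q in [0, 1]) of a sorted list."""
    pos = q * (len(sorted_vals) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(sorted_vals) - 1)
    return sorted_vals[lo] + (pos - lo) * (sorted_vals[hi] - sorted_vals[lo])


def winsorize(values, lower=0.10, upper=0.90):
    """Cap values below/above the given percentiles; keep every observation."""
    s = sorted(values)
    lo_cap, hi_cap = percentile(s, lower), percentile(s, upper)
    return [min(max(v, lo_cap), hi_cap) for v in values]


def standardize(values):
    """Convert values to z-scores (all zeros if there is no variation)."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


# Hypothetical median earnings for ten schools, with one extreme outlier.
earnings = [38_000, 42_000, 45_000, 47_000, 50_000,
            52_000, 55_000, 58_000, 61_000, 250_000]

capped = winsorize(earnings)
z_scores = standardize(capped)

print(f"largest value before: {max(earnings):,}, after: {max(capped):,.0f}")
```

The outlier is pulled in toward the rest of the group rather than removed, so it still counts as a high earner without dominating the mean and standard deviation used in the z-scores.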

One of the more disappointing things for me in this update was the lack of a P25/P75 spread. The median isn't a promise at any earnings horizon. The range of outcomes for completers still varies significantly, and anchoring to the middle without understanding the spread is a mistake, as we've written about before.

As we said at the time, the spread is at least as important as the median, and I hope it's something the Scorecard team adds in a future update. In its place they added program "threshold" metrics, which show the P25 and P75 at the program level so you can compare them against the median earnings for the program (and the institution). This is interesting for its own reasons, but losing the sense of variance within the metrics themselves makes them far less useful for actual decision making, IMO. It reflects a shift toward policy-level heuristic thinking, which I think is the downside of the College Scorecard.

So does the change matter?

That's the right question — and the answer depends on who you are and what you're trying to find out.

How to Think About It

For most schools, not much changes. A 0.90 correlation means the same institutions that looked strong on 10-year earnings still mostly look strong on 4-year earnings. If you've been using Scorecard data to get a rough sense of whether a school produces good outcomes, that signal is largely intact.

But the shift does change what the number is telling you — and there are two situations where that distinction is worth adjusting for.

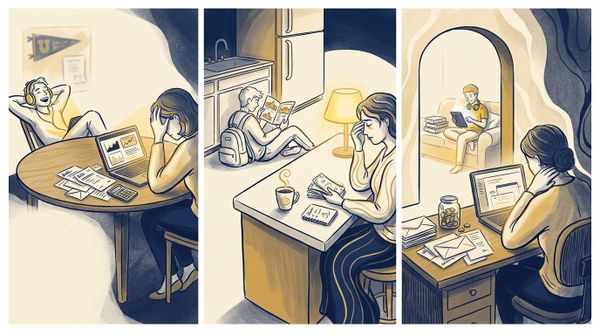

If you're a non-traditional student

If you're over 25, attending part-time, or returning to school to formalize credentials in a field you already work in, the 4yr figure is actually more relevant to your situation than the 10yr figure ever was. The 10yr entry-cohort metric was always measuring a blended population — completers and non-completers, traditional and non-traditional students together — which can obscure the value a specific type of student gets from a specific type of institution.

The table below shows schools where the 4yr figure runs significantly higher than 10yr. The pattern across all four isn't coincidental — these are schools serving older, often part-time students who are using a credential to accelerate in fields they're already working in. The 10yr figure dilutes that story by pulling in a broader entrant pool. The 4yr figure describes the people these schools are actually built for.

| School | 4yr | 10yr | Gap | Grad rate | Age 25+ | Part-time |
|---|---|---|---|---|---|---|
| Florida Institute of Technology | $90,403 | $43,137 | +$47,266 | 64.5% | 74.9% | 34.7% |
| Davenport University | $69,009 | $45,099 | +$23,910 | 53.2% | 32.5% | 40.5% |
| Webster University | $75,697 | $50,876 | +$24,821 | 62.7% | 20.7% | 14.1% |
| Purdue University Northwest | $73,337 | $48,318 | +$25,019 | 43.5% | 14.1% | 18.8% |

Florida Institute of Technology is the extreme case — nearly 75% of students are over 25, and the 4yr figure is more than double the 10yr. But Webster and Purdue NW show the same directional effect at more moderate enrollment profiles, which suggests this isn't just an outlier phenomenon. It's a structural pattern in how the two metrics treat non-traditional enrollment.

If that's your profile, the 4yr number is closer to your question.

If you're a traditional student heading toward graduate school

The inverse applies at schools with strong graduate school pipelines. The table below shows schools where 10yr runs significantly higher than 4yr — all of them traditional residential liberal arts colleges with high completion rates, minimal part-time enrollment, and very few students over 25.

| School | 4yr | 10yr | Gap | Grad rate | Age 25+ | Part-time |
|---|---|---|---|---|---|---|
| Reed College | $44,518 | $62,927 | −$18,409 | 73.8% | 1.1% | 0.6% |
| Bryn Mawr College | $57,476 | $75,217 | −$17,741 | 83.6% | 0.7% | 0.5% |
| Kenyon College | $57,786 | $71,830 | −$14,044 | 84.4% | 0.1% | 0.0% |
| Colorado College | $56,959 | $65,222 | −$8,263 | 86.8% | 0.2% | 1.4% |
| Oberlin College | $51,715 | $58,343 | −$6,628 | 80.2% | 0.3% | 0.6% |

The 4yr figures here aren't low because these schools underperform. They're low because the not-enrolled filter removes students who went straight from graduation into graduate programs, medical school, or law school — exactly the students most likely to show up at the higher end of long-run earnings (maybe). The 10yr figure captures where those careers eventually land. The 4yr figure catches them before they get there.

One additional nuance worth flagging: these earnings describe completers who received federal financial aid. At schools like Reed, Kenyon, and Oberlin, where a meaningful share of students receive no federal aid, the measured cohort may not fully represent the graduate population. The gap could look different across the full class.

If your path involves graduate school or a field with a longer career trajectory, it's worth considering ways to evaluate program strength that go beyond the 4-year metric.

What the metric shift changes for you informationally

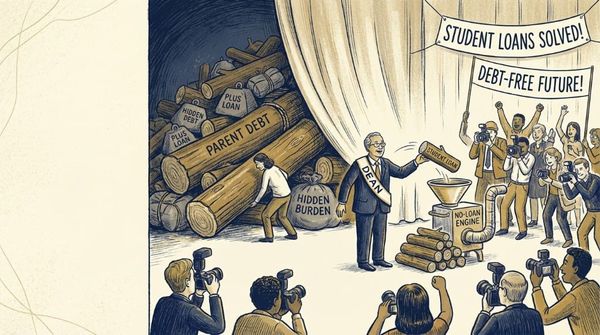

If the numbers are mostly the same, the differences themselves might not register, but I think it's still important to think about them, because the move to completers-only changes which risks are visible in the data.

The 10yr entry-cohort metric included everyone who enrolled. A school with a 43% graduation rate showed you a distribution that implicitly reflected what happens when things don't go as planned: the students who left without a credential and ended up earning less than they'd hoped. That downside scenario is now out of the number.

The student most exposed to this gap is a first-generation student with limited financial margin enrolling at a school with a 45% graduation rate. They're now looking at an earnings figure that excludes the outcome of roughly half their future peers. The number isn't wrong; it accurately describes what completers earn. But it says nothing about the risk that's most relevant to that student's actual decision.

The 4yr figure also compresses the upper end of the distribution in a specific way. Students who went straight to graduate school after completing their degree are filtered out by the not-enrolled condition, and they're often the students who would show up at the higher end of long-run earnings. What you're left with is a cleaner read of the middle: conditional on finishing and working, what does a typical completer earn four years out? That's a genuinely useful number. But it's answering a narrower question than the entry-cohort figure was.

What to use alongside it

Because 4yr earnings are now a "conditional on completing" signal, the data you need alongside them becomes more important, not less:

Graduation rate. How realistic is that condition? A 4yr earnings figure at a school with a 45% completion rate describes a selected group — a dynamic we covered in depth when we looked at what graduation rates actually count. At a school with an 85% completion rate, 4yr earnings describe almost everyone who enrolls.

Debt picture. Earnings without repayment context are incomplete — but it's worth knowing that program-level cost data, which would let you pair earnings and debt at the major level, was submitted by institutions in October 2025 and hasn't been released yet. For now, institution-level average debt is the best available proxy, and it's a crude one at schools with wide variation across programs.

The metric change didn't create these gaps — you always needed the full picture, and our financial GPS framework is built around pulling exactly these signals together into a coherent decision. But the shift to completers-only makes the distance between what the earnings number shows and what you need to make a decision slightly larger, particularly for students at schools where not everyone finishes.

The metric you use shapes what you see. The more important question is whether you know what it's not showing you.

As I go through updating our database with these new metrics (and others), I'll share some notes here and in our changelog. If there is anything in particular you're interested in, please do not hesitate to reach out.